Imagine sitting in a psychology lab, listening to a heated debate through headphones filled with static. You have a button that turns off the white noise so you can hear the argument more clearly. When would you press it? Most people do so when the speaker supports their views. When the opposing side begins talking, they tend to leave the noise on. This simple action captures one of the mind’s most powerful biases: we literally make belief-confirming information easier to hear.

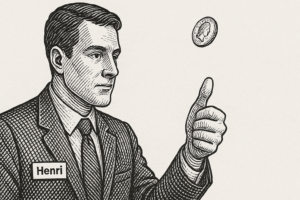

This is confirmation bias, the natural tendency to seek, interpret, and remember information that supports what we already believe. Psychologists Timothy Brock and Joe Balloun demonstrated it with exactly this setup in 1967. Participants listened to recorded messages on contested topics, such as whether smoking causes lung cancer, while static played in the background, and they could press a button to clear it whenever they chose. The results revealed a striking pattern: people pressed the button more often when hearing arguments that aligned with their own opinions, making those ideas easier to process, and less often when listening to opposing viewpoints. They were, quite literally, turning up the volume on what they already believed. A decade later, Charles Lord, Lee Ross, and Mark Lepper (1979) showed how deeply the same bias shapes our thinking: supporters and opponents of capital punishment read the same mixed evidence, and each side rated the studies that agreed with them as more convincing.

This phenomenon, called biased assimilation, shows how we unconsciously protect our worldview. Later studies have traced this process inside the brain. Rollwage and colleagues (2020) found that when people feel highly confident about a decision, the brain processes supporting evidence more strongly, while contradictory information is largely discounted. Confirmation bias is not simply stubbornness; it works more like a built-in efficiency system that preserves coherence at the cost of accuracy.

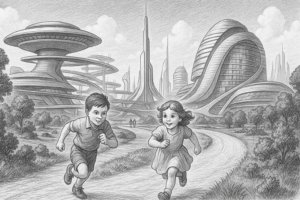

You do not need to be in a psychology lab to see this in action. Social media platforms have turned confirmation bias into an algorithmic feature. Each time you “like” a post, skip a video, or follow an account that aligns with your beliefs, the platform’s artificial intelligence learns what you prefer and feeds you more of it. Over time, this creates an echo chamber that amplifies your perspective while muting others.
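For readers who like to see the loop in motion, here is a minimal sketch of that feedback cycle. It is a toy model, not how any real platform works: the function name `simulate_feed`, the like probabilities, and the `learning_rate` are all illustrative assumptions.

```python
def simulate_feed(rounds=20, like_agree=0.8, like_oppose=0.2, learning_rate=0.3):
    """Toy model: return the share of belief-confirming posts shown each round.

    All parameter values are made-up assumptions chosen for illustration.
    """
    share_agree = 0.5  # the feed starts balanced
    history = [share_agree]
    for _ in range(rounds):
        # Expected likes the user gives to each kind of post this round.
        likes_agree = share_agree * like_agree
        likes_oppose = (1 - share_agree) * like_oppose
        # Fraction of all likes that went to belief-confirming posts.
        liked_share = likes_agree / (likes_agree + likes_oppose)
        # The platform nudges the feed toward whatever earned the likes.
        share_agree += learning_rate * (liked_share - share_agree)
        history.append(share_agree)
    return history


if __name__ == "__main__":
    for round_number, share in enumerate(simulate_feed()):
        print(round_number, round(share, 3))
```

In this sketch the share of agreeable posts rises every round and never drifts back, even though the user never asks for a one-sided feed. If the two like probabilities are set equal, the feed stays balanced, which suggests the echo chamber comes from a small preference being fed back on itself rather than from any single choice.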

Recent studies have also shown that confirmation bias extends to how humans interact with AI. In research on AI-assisted decision-making, participants trusted algorithmic recommendations that matched their own judgments but were skeptical when the AI offered contradictory advice. This bias can be dangerous in fields like healthcare or hiring, where human users might selectively accept AI outputs that confirm their instincts. Generative models like ChatGPT, while powerful, can show a similar tendency: they often mirror user expectations, providing agreeable answers more often than challenging ones. One recent study looked at issues of religiosity and cultural diversity and found that generative AI not only reflects but amplifies cognitive biases. We end up with a feedback loop where human and machine reinforce each other’s blind spots.

This combination of human psychology and algorithmic design reveals a modern version of the white-noise experiment. Instead of static in our headphones, we now have digital filters curating what we see and hear. Each “like,” search, or chat prompt is a decision about what information becomes clearer and what remains muffled. We think we are choosing freely, but often our choices are guided by invisible systems that learn our biases faster than we do.

Psychologists call our instinct to defend the beliefs that give us identity and coherence “motivated reasoning.” Listening to the opposition, whether in conversation with your crazy aunt about politics or in an online forum, creates a discomfort known as cognitive dissonance. Many of us relieve that discomfort not by questioning ourselves but by pressing the modern “stop” button: unfollowing, muting, or scrolling past. The danger is that this habit narrows our intellectual world and deepens social divides.

When using AI tools or social media feeds, it helps to deliberately seek out diverse perspectives, as painful as it can be. In the same way researchers design experiments to test opposing hypotheses, we can prompt ourselves to challenge our assumptions. Asking an AI system for counterarguments, alternative evidence, or sources that disagree with a position can reveal how easily both humans and machines slip into patterns of reinforcement.

The classic white-noise study reminds us that confirmation bias is not only about thought but also about perception. We tend to “turn up the volume” on what feels right and tune out what feels wrong. These days, we also do it through clicks, algorithms, and AI chatbots. The challenge is to resist the comfort of that clarity and to remember that truth often lives in the parts we prefer not to hear.